[This was originally published on Medium April 12th 2016]

The internet has been stolen from you. Scooped out of your hands without much of a fuss. A small number of corporations are locked in a zero-sum game of land grabs. Your ideas, experiences and social connections, expressed digitally, are the land they grab. They have made billions of dollars by stealing your magic, and they steal it fresh every day. We’re going to put a stop to that. We’re going to reclaim the internet using the techniques that Gandhi used to defeat the British Empire. Let me explain how.

The value of a network increases exponentially based on the number of people connected to it. This fact is what fueled the explosion of creativity, enthusiasm and optimism around the world wide web in the 1990s. A revolution was happening. Anyone with a computer and gumption could create their own piece of real estate on that network. We were creating this amazing, powerful thing and everyone had a chance to be part of it. Then the “dot-com bubble” burst in 2000 and the story changed. While the rhetoric of Silicon Valley continued to talk about the web as something that benefits everyone, technology companies switched to creating closed, captive networks.

If the value of a network increases exponentially based on the number of people connected to it, then owning a network with a lot of people connected to it will make your company extremely valuable. The goal was no longer to create an inclusive, open, distributed global network. It was to create closed global networks that could be controlled and monetized centrally.

After 2000 or 2001, with the rise of web 2.0, the dominant business model in Silicon Valley has been to accumulate large networks of users, hold their data captive, and then find ways to make money off that captive web of data. It’s a game of thrones — aristocracy vying for control of real estate. It’s investors paying young idealists to trap networks and turn them into profit engines, just like the Victorians trapped wild animals to put them in zoos. We, in aggregate, the networks of us, are the wild things that they captured. What happened to the explosive optimism of the www? It got wrangled when we allowed the web of data to become trapped in centralized servers.

We’re going to let the beasts out of their cages. You, me, all of us. We’re going to change the world by putting the power of networks back in the hands of everyone. The tide of technology has turned away from centralization. It’s picking up momentum in the opposite direction, towards decentralization. We’re going to ensure that tide makes the second world wide web — the web of data — truly decentralized. We’re reclaiming our data and by doing that we’re reclaiming the technological, social and economic power that comes from controlling those data. The data monopolies won’t be able to stop us.

The lesson of the 1990s was that an open, distributed, uncontrolled, global network is profoundly transformational. The lesson of the 2000s was that companies can make a lot of money by cultivating captive networks. The lesson of the 2010s will be that the bonds of digital captivity can be broken.

Learning from past efforts

This has been tried before. Notably, the diaspora* project set out with similar goals in 2010. A group of NYU students raised $200k on Kickstarter to create diaspora*, a decentralized alternative to Facebook, saying “When you have a Diaspora seed of your own, you own your social graph, you have access to your information however you want, whenever you want, and you have full control of your online identity.” They proposed creating “the privacy aware, personally controlled, do-it-all distributed open source social network”. They received immediate accolades and thousands of interested participants.

Two years later, in 2012, the project had failed to get traction. One of the founders had died in 2011. The remaining founders walked away, joining Y-Combinator and leaving the Free Software Support Network to steward the project. The number of code contributors plummeted to one fifth of 2011’s peak participation. Today, in 2016, community members continue to contribute improvements but the project has lost nearly all of its momentum.

It didn’t work out, but have hope. They got a few key things wrong, the most important being that they arrived too early. The diaspora* project was a dry-run. Their missteps show us how to succeed. They stumbled on mistakes of language, timing, and architecture. We can do better. We should try again. This time it will work.

Language: Swadeshi, not Diaspora

Language is important, especially when you want to build something that touches many people from many cultures. Diaspora is the wrong word for what we’re talking about here. It hobbles the project from the moment that people hear its name.

I recently had a conversation with an old friend from high school who’s now a rabbi in San Francisco. He gave me a very funny look when I asked, “Have you heard of diaspora?” The notion of diaspora has, after all, been at the center of the Jewish experience for nearly 3000 years. “Not that diaspora,” I clarified. “The open source software project.” “No. Haven’t heard of it,” he replied, clearly skeptical about what he was about to hear. I felt a bit embarrassed that I was using the word diaspora in such a misaligned way.

A diaspora is a population that has migrated away from its homeland. The term is used almost exclusively to describe people who were driven out or taken from their homeland by force, usually referring either to the Jewish diaspora (Jews driven out of Israel) or the African diaspora (People taken from Africa by the North Atlantic slavery industry).

Facebook is not anyone’s homeland, nor is Gmail, Twitter, LinkedIn, or Yelp. We are not talking about creating a diaspora. We’re talking about self rule. Specifically, we’re talking about something that Mahatma Gandhi called swadeshi. Swadeshi is a Sanskrit word that has come to mean “self-sufficiency”. It played a central role in Gandhi’s successful nonviolent campaign against British imperial rule in India. According to the Metta Center for Nonviolence:

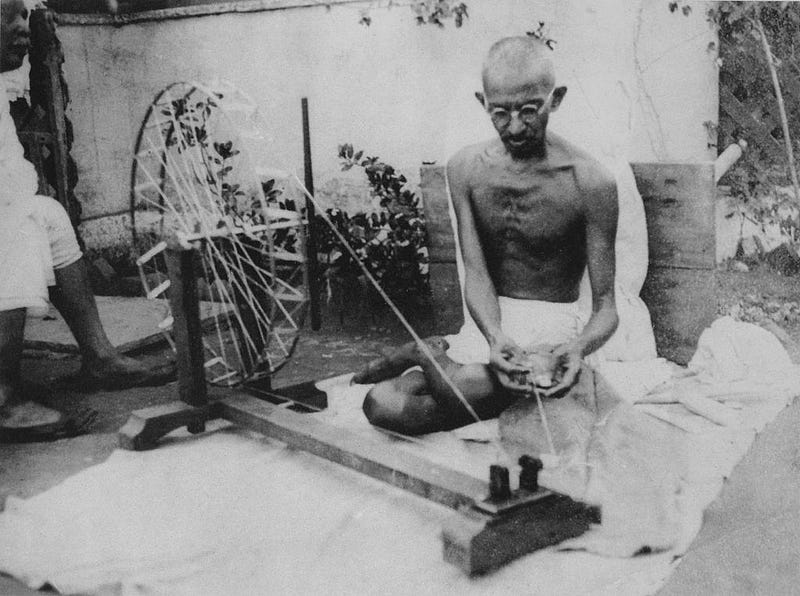

“Swadeshi is the focus on acting within and from one’s own community, both politically and economically. In other words, it is the interdependence of community and self-sufficiency. Gandhi believed this would lead to independence (swaraj), as British control of India was rooted in control of her indigenous industries. Swadeshi was the key to the independence of India, and was represented by the charkha or the spinning wheel, the “center of the solar system” of Gandhi’s constructive program.”

The Indian people reclaimed control of their nation by claiming control over their economic productivity. The iconic example of this was the hand-driven spinning wheels (charkha) that they used to spin their own cloth, allowing them to clothe themselves without dependence on the British industrial system.

We don’t need a diaspora. We need a swadeshi movement. The first step is to create this movement’s spinning wheel, the charkha, the tool that allows us to spin the threads of our lives and communities locally while sharing them, shaping them, exchanging them, and creating value without relying on the machinery of far-away, centralized imperial rulers.

Timing: Both People and Technology Needed Time to Catch Up

The diaspora* project arrived too early. In 2010, the people weren’t there yet. The technology wasn’t there yet either. It took time for people to see the value of these data, and for the tides of technology to turn towards decentralization.

When the diaspora* project began in 2010 it was not clear to mainstream society how important or influential these technologies were. When commenting about social media, baby boomers and Gen-Xers alike would often say things like “I don’t care what you had for breakfast,” implying that platforms like Facebook and Twitter were mainly forums for meaningless, worthless chatter. Little did they know that they would become Facebook’s largest user base by 2016.

People see the patterns now. They’re talking about it and they don’t like it, but they don’t see an alternative.

In 2010 neither Facebook, LinkedIn nor Twitter was publicly traded. Facebook had 400 million users (6% of the world’s population), LinkedIn had 90 million users and Twitter had 26 million. Snapchat didn’t even exist yet. Now Facebook has 1.23 billion users (17% of the world’s population) and LinkedIn has 414 million. Facebook reaps roughly $12.76 in advertising revenue per user every year and works steadily to consolidate media traffic onto its platform. Meanwhile, it openly experiments with techniques for manipulating users’ emotional states. When disasters strike, people now turn to Facebook’s Safety Check to find out about friends and loved ones rather than turning to relief organizations like the Red Cross, who provide similar services.

In other words, Facebook has managed to monetize almost every aspect of our lives, even death and disasters. In the process it has eroded our social infrastructure, undermined our psychological health and threatened the sustainability of organizations & social patterns that exist to inform us, heal us, and help us survive disasters. People see the patterns now, and they don’t like it.

Mainstream internet users now see the economic and social value of these data, but they experience the data as something they don’t control. They experience data as something corporations and governments use for profit or power. They don’t see an alternative where individuals and communities can own the data about their lives. We need to create that alternative.

The technology wasn’t ready yet either. In 2010 the tide of technology innovation was rushing gleefully in the direction of centralization — centralizing computation and storage into server farms that use virtualization to run massively scalable systems (a.k.a “the cloud”).

When those NYU students set out to create diaspora* they reached for the leading tools and techniques of the day, which are all optimized to run on cloud architecture, so it’s no surprise that the resulting software begs to be deployed as a cloud service. They set out to create something decentralized, but the tide of the tech industry pushed them towards the tools, techniques, and patterns of centralization.

Now, thankfully, the tide has turned away from the cloud and towards decentralization. This might sound like a strange thing to say at the peak of cloud computing enthusiasm, but remember that if you’re swimming in an ocean at high tide, you’re surrounded by water. You have to watch the edges and the shallow spots to see the change. In those places you’ll see the water change direction or start to recede. We’re at the high tide of server farms and cloud computing. We’re drowning in it and the water will take a long time to recede, but the tide has turned.

The innovators of tech have turned their attention to technologies like filesystem containers (e.g. Docker), hash trees (e.g. git, Bitcoin), and peer-to-peer replication patterns (e.g. Cassandra, BitTorrent): the building blocks of this new decentralized system. Most of this work was initially motivated by needs within cloud infrastructure (management of virtualized operating systems, large-scale software development/deployment, and high availability of databases), but the technologies have burst out of the boxes in which they grew.

Now the technology is ready. We’re ready too.

Architecture: Think Email and BitTorrent, not Web Servers

The diaspora* Kickstarter campaign describes the intended product as “a personal web server that stores all of your information and shares it with your friends,” a “seed” that “knows how to securely share (using GPG) your pictures, videos, and more.”

The idea was that everyone would have their own diaspora* seed, and those seeds would be personal web servers.

Personal web servers are not the solution. Running a web server is like owning a horse. It’s expensive, labor intensive and requires dedicated space (barns, server space). When things go wrong, you need to hire specialists to diagnose the problems and try to fix them, and things always go wrong. Owning horses and running web servers are activities that only make sense as commercial undertakings or as expensive hobbies. We don’t need personal web servers. We need something that anyone can run on their phones, their laptops, or whatever other devices they cobble together, and we need those devices to communicate with each other in a decentralized, infinitely scalable, repeatable way. We need a peer-to-peer architecture, like email (SMTP) and BitTorrent (BEP).

The solution is to decentralize further, relying on a peer-to-peer model. This is where the world wide web of the 1990s stops being a good example because it relies on HTTP, a client-server architecture. A better example is the email system, which pre-dates HTTP by a decade and relies on a peer-to-peer architecture. Everyone can run an email client and that email client is part of the giant peer-to-peer network that makes the global email system work. We still use servers in this setup (aka “mail servers”), but those servers function as peers, not central authorities, and no single email provider controls the whole network, nor can they gain control of it.

The other parallel to email becomes clear when you look at who holds the data. When I send an email to my friend Bess, once Bess receives that email, there are three copies of the email and its attachments — 1) the original email in my “sent mail”, 2) the copy on the server, and 3) the copy in Bess’ inbox. Any of us can walk away with all of our emails, maybe moving them into a new system that suits our needs better. Contrast this with a centralized model like Facebook. When I post something on Bess’ Facebook wall, only Facebook has a copy of that post. If Bess wants to see it, she has to go to Facebook and ask for it. If I want to see it again I have to do the same. They hold the data. We don’t. That’s the wrong way around.

We should each, as individuals, be able to hold the data we’ve created and the data that has been shared with us. Any constraints on how we use the data should be based on the arrangements between us and the people who created the data, just as Gmail doesn’t have any say in what Bess does with my email after she’s downloaded it from their mail server but I do have some say in what she does with it, both socially and in the eyes of the law.

Each person can create feeds of their “social” posts, a bit like RSS feeds, and allow the updates to replicate across the network of peers.

A Rough Sketch: Replicating Feeds of Social Data

How will it work? In short, create an app, one that you run on your computer or phone, that writes social data (aka “posts”) into local databases and then replicates those databases to peers using a peer-to-peer protocol like BitTorrent. You can think of these databases as “feeds” of data that you control. Each time you create a new post in a feed, edit an existing post, or delete an existing post, those changes in your feed will automatically replicate across the network to all the peers who have copies of the feed. This bypasses the need for a central server to house our data. It allows us to communicate directly with each other without losing the expressiveness and worldwide networked-ness of today’s social media platforms.
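To make the idea of a replicating feed a little more concrete, here is a minimal sketch in Python. It is only an illustration under loud assumptions: the `Feed` class, the `replicate` function, and the entry fields are all hypothetical names invented for this example, everything lives in memory, and "the network" is just one Python object copying from another. A real system would exchange these entries over a peer-to-peer protocol.

```python
from dataclasses import dataclass, field


@dataclass
class Feed:
    """An append-only log of changes to one person's posts (hypothetical model)."""
    owner: str
    log: list = field(default_factory=list)

    def append(self, op, post_id, body=None):
        # Every create, edit, or delete is recorded as a new log entry.
        self.log.append({"seq": len(self.log), "op": op, "post_id": post_id, "body": body})

    def posts(self):
        # Replay the log from the start to get the current state of the feed.
        state = {}
        for entry in self.log:
            if entry["op"] == "delete":
                state.pop(entry["post_id"], None)
            else:  # "create" or "edit"
                state[entry["post_id"]] = entry["body"]
        return state


def replicate(source, replica):
    """Copy the entries the replica is missing; return how many were copied."""
    missing = source.log[len(replica.log):]
    replica.log.extend(dict(e) for e in missing)
    return len(missing)


mine = Feed(owner="me")
mine.append("create", "p1", "Hello, Bess")
mine.append("create", "p2", "A second post")

bess_copy = Feed(owner="me")  # Bess's local copy of my feed
replicate(mine, bess_copy)

# Later changes are just more log entries, so only the new ones need to travel.
mine.append("edit", "p1", "Hello again, Bess")
mine.append("delete", "p2")
copied = replicate(mine, bess_copy)
```

Because the feed is a log of changes rather than a snapshot, a peer only ever needs to ask for the entries it has not seen yet, and both copies arrive at the same set of posts by replaying the same log.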

Check out this slide deck with diagrams explaining the basic ideas.

The technologies we will use to make this work are already coming together. The dat project’s hypercore module lets you put arbitrary content into Merkle DAGs (the same technique used by Bitcoin and git to track the integrity of data) and replicate those DAGs across a network of peers using BitTorrent. The dat jawn team are working on the particulars of putting tabular data into that system. Once those two pieces are working, the MVP will be to 1) establish a first-blush data model for social posts, possibly based on the diaspora* data models, 2) create tools to index incoming feeds into local databases (leveldb, postgres, elasticsearch, etc.), and 3) start building applications that let you browse timelines, search through your network, create posts, comment on posts, etc.
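Why do hashes matter here? If copies of a feed are scattered across many peers, each peer needs a way to check that nobody altered the feed along the way. The sketch below shows the underlying idea with a plain linear hash chain, which is a much simpler cousin of a Merkle DAG. It is not hypercore's actual data format or API; the function names and entry layout are assumptions made up for illustration, using only Python's standard `hashlib` and `json` modules.

```python
import hashlib
import json


def entry_hash(prev_hash, payload):
    # Hash the previous entry's hash together with this entry's content,
    # so each entry commits to the entire history before it.
    data = json.dumps({"prev": prev_hash, "payload": payload}, sort_keys=True)
    return hashlib.sha256(data.encode("utf-8")).hexdigest()


def append_entry(log, payload):
    prev_hash = log[-1]["hash"] if log else None
    log.append({"prev": prev_hash, "payload": payload, "hash": entry_hash(prev_hash, payload)})


def verify(log):
    """Return True if every entry links to the one before it and matches its own hash."""
    prev_hash = None
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != entry_hash(entry["prev"], entry["payload"]):
            return False
        prev_hash = entry["hash"]
    return True


feed = []
append_entry(feed, {"post_id": "p1", "body": "Hello, Bess"})
append_entry(feed, {"post_id": "p2", "body": "A second post"})
intact = verify(feed)

# A peer that rewrites an earlier entry breaks the chain, and verify() notices.
tampered = [dict(e) for e in feed]
tampered[0]["payload"] = {"post_id": "p1", "body": "Something I never wrote"}
tamper_detected = not verify(tampered)
```

A hash chain on its own only proves the log is internally consistent. To prove that a feed really came from its owner, a real system would also need the owner to sign the latest hash, which this sketch leaves out.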

Where to Go from Here

Want to get involved? Want more technical details? Go to https://github.com/swadeshi/wheel for more info, including info on how to get involved. You can also post ideas, questions, etc. in that project’s issue tracker.

If you want to get periodic updates on the project, sign up for the mailing list.

This post is dedicated to Aaron Swartz (1986–2013) and Ilya Zhitomirskiy (1989–2011).